What if the software on your computer could complete complex tasks nearly as reliably as a human? That is no longer science fiction; it is the documented reality of AI agents in 2026. According to Stanford University’s 2026 AI Index Report, AI agents now achieve a 66.3% success rate on real-world computer tasks, up from about 12% in 2025. In a single year, the gap between AI agents and humans on this benchmark has narrowed to about six percentage points.

For entrepreneurs, developers, and business leaders, this isn’t just an impressive statistic. It’s a strategic signal that AI agents in 2026 are no longer experimental technology. They are operational tools ready for deployment across core business workflows. The question has shifted from “can AI agents do real work?” to “which parts of my operations should they handle now?” In this post, we break down the Stanford findings, explore what’s fueling the leap, and outline what it means for your organization today.

The Stanford AI Index 2026: What the Numbers Actually Mean

Stanford’s HAI (Human-Centered Artificial Intelligence) institute publishes its annual AI Index to rigorously benchmark progress across the field. The 2026 edition landed with a headline finding that resonated across the entire tech industry: on OSWorld — a widely accepted benchmark that tests AI agents on real computer tasks spanning operating systems, browsers, file management, and productivity apps — accuracy climbed from roughly 12% in 2025 to 66.3% in 2026. That places AI agents within just six percentage points of average human performance on the same tests.

The gains extend well beyond a single benchmark. On Terminal-Bench, which measures task completion in command-line environments, success rates jumped from 20% to 77.3%. In specialized cybersecurity task evaluations, agentic AI systems now solve problems 93% of the time — up from 15% just two years ago. These aren’t marginal, incremental improvements. They represent a fundamental step-change in what AI systems can reliably execute without human assistance. As Unite.AI’s analysis of the report puts it, the field is “racing ahead of its guardrails” — capability gains are clearly outpacing the governance frameworks being built to manage them.
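To keep percentage points and relative improvement apart, the three figures above can be run through a few lines of arithmetic. This is only a sketch: the numbers are the ones quoted in this post, the helper name `gain` is made up for illustration, and the cybersecurity figure covers two years rather than one.

```python
# Before/after success rates (in percent) as quoted in this post.
benchmarks = {
    "OSWorld": (12.0, 66.3),
    "Terminal-Bench": (20.0, 77.3),
    "Cybersecurity tasks": (15.0, 93.0),  # two-year span, not one
}


def gain(before: float, after: float) -> tuple[float, float]:
    """Return (percentage-point gain, multiplicative improvement)."""
    return round(after - before, 1), round(after / before, 1)


for name, (before, after) in benchmarks.items():
    points, factor = gain(before, after)
    print(f"{name}: +{points} points, {factor}x the earlier rate")
```

A gain of 54.3 points on OSWorld is also a 5.5x relative improvement. The two framings answer different questions, so it helps to state which one a headline number refers to.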

What’s Driving the Leap in Autonomous AI Agent Capabilities?

The jump from 12% to 66% in a single year didn’t happen by accident. Several converging factors explain the dramatic improvement in autonomous AI agent performance throughout 2025 and early 2026.

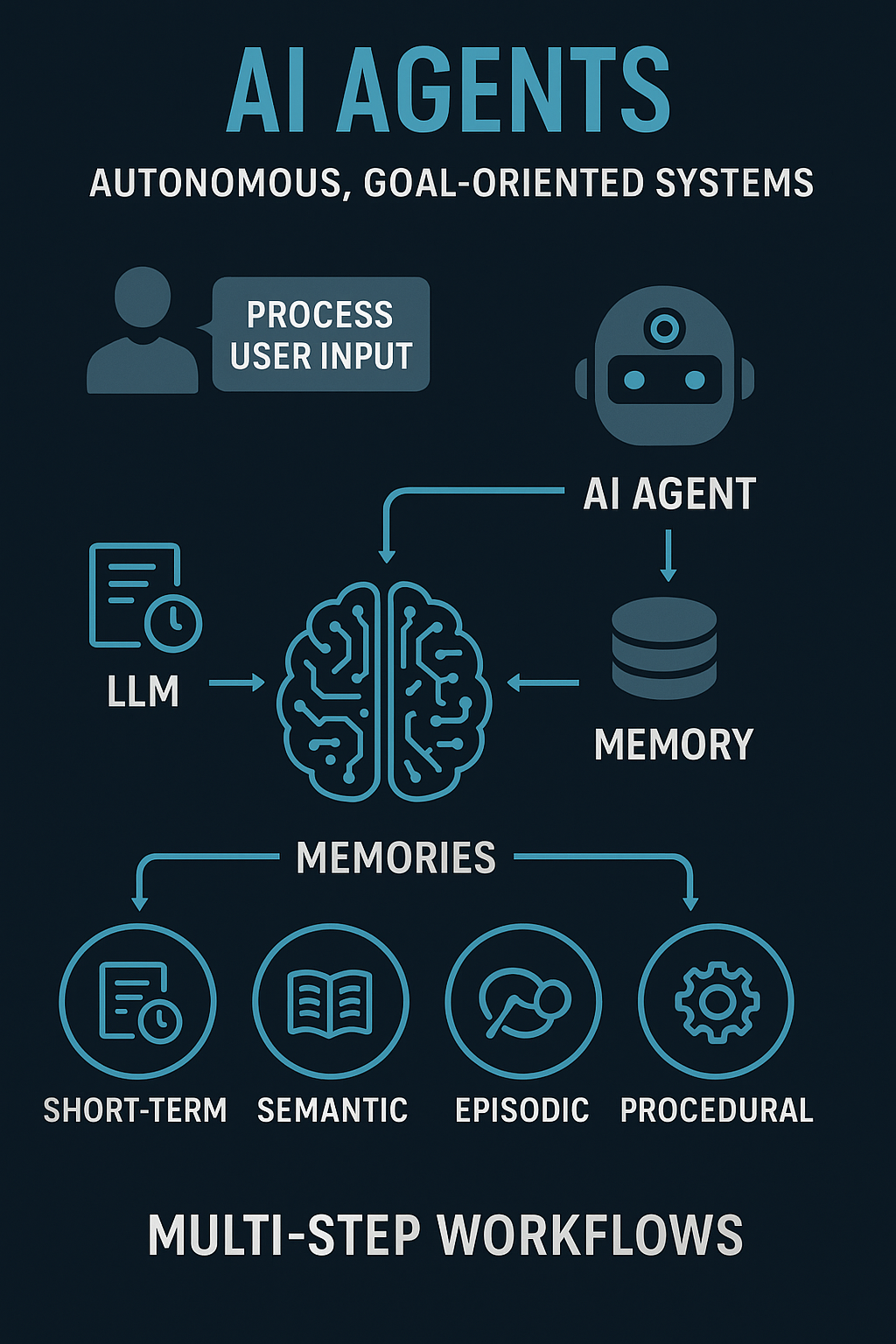

Better reasoning models. The underlying language models powering AI agents have become dramatically more capable at multi-step planning, error recovery, and self-correction. Where earlier models would fail on step three of a ten-step task, today’s reasoning-optimized models identify when something goes wrong and backtrack mid-execution — a quality that was nearly impossible to achieve reliably 18 months ago.

Persistent memory and context management. Agents can now track what they’ve done and what failed, then adjust strategy accordingly, mimicking the contextual awareness that makes human workers effective across sessions. Pairing memory architecture with careful prompt engineering has become a foundational skill for teams building production systems.

Multi-agent orchestration. Solo agents are giving way to coordinated systems where specialized sub-agents handle discrete tasks and pass structured context to one another. This division of cognitive labor mirrors how high-performing human teams operate, and it’s a core reason why complex, multi-step workflows are now within reach. NVIDIA’s open-source Agent Toolkit, adopted by Adobe, Salesforce, SAP, and over a dozen other enterprise platforms, is helping standardize how these orchestration layers are built.

Expanding tool integrations. The number of APIs, MCP servers, and native integrations available to AI agents has exploded, giving them the ability to interact with the real digital environment — not just generate text about it.
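The error-recovery and memory ideas above can be made concrete with a toy loop. The sketch below is not how any particular agent framework works; `AgentMemory`, `run_plan`, and the two example steps are hypothetical names invented for illustration, and a real agent would replan rather than simply retry.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentMemory:
    """Hypothetical record of what the agent has done and what failed."""
    completed: list[str] = field(default_factory=list)
    failures: list[tuple[str, str]] = field(default_factory=list)


def run_plan(
    steps: list[tuple[str, Callable[[int], None]]],
    memory: AgentMemory,
    max_attempts: int = 2,
) -> bool:
    """Run named steps in order, retrying a failed step before giving up."""
    for name, action in steps:
        for attempt in range(1, max_attempts + 1):
            try:
                action(attempt)
                memory.completed.append(name)
                break  # step succeeded, move on to the next one
            except RuntimeError as err:
                # Remember the failure so later decisions can use it.
                memory.failures.append((name, str(err)))
        else:
            return False  # every attempt failed; stop the plan
    return True


def open_report(attempt: int) -> None:
    pass  # stand-in for a step that always works


def export_pdf(attempt: int) -> None:
    if attempt == 1:
        raise RuntimeError("dialog did not appear")  # simulated flaky step


memory = AgentMemory()
ok = run_plan([("open_report", open_report), ("export_pdf", export_pdf)], memory)
print(ok, memory.completed, memory.failures)
```

Here the second step fails once, the failure is recorded, and the retry succeeds, so the plan completes while the memory still shows what went wrong along the way.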

How AI Agent Task Automation Is Reshaping Business in 2026

Benchmark numbers matter because they translate directly into real-world business impact. AI agent task automation is no longer confined to simple rule-based scripts — it’s taking over workflows that previously demanded human judgment and contextual reasoning.

In customer operations, autonomous agents now handle ticket triage, routing, and first-response resolution — with organizations reporting 50–70% reductions in response time, according to UiPath’s 2026 Automation Trends Report. In software development, agentic coding tools autonomously write, test, debug, and deploy code across entire features. In finance and procurement, agents are authorized to approve routine purchase orders, reconcile accounts, and flag anomalies without a human in the loop for standard transactions.

Gartner’s latest survey data shows that 42% of companies plan to deploy AI agents within the next 12 months. But Gartner also predicts that over 40% of those deployments will struggle by the end of 2027, primarily due to integration complexity, security gaps, and vaguely defined scope. The implication is clear: the opportunity is enormous, but execution quality is what separates leaders from laggards. Selecting the right AI agent tools for your specific business context is as strategically important as the decision to adopt them in the first place.

The Road Ahead: Governance, Risk, and the 34% Gap That Still Matters

It would be a mistake to read the Stanford data as a green light for unchecked AI agent deployment. That remaining 34% failure rate on OSWorld tasks is significant — it means AI agents still fail on roughly one in three real-world computer tasks under rigorous test conditions. In enterprise settings with high-stakes workflows, that failure rate demands thoughtful design: fallback mechanisms, human oversight checkpoints, and audit trails built into every workflow from the start.
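As a rough illustration of those three design elements, the sketch below wraps an agent action in a scope check, a human-approval threshold, and an audit log. Everything here is an assumption made for the example: `Policy`, `guarded_execute`, the action names, and the 1,000 threshold are hypothetical and not taken from any framework mentioned in this post.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Policy:
    """Hypothetical scope definition for one agent."""
    allowed_actions: frozenset
    approval_threshold: float  # amounts above this go to a human


def guarded_execute(
    action: str,
    amount: float,
    policy: Policy,
    execute: Callable[[], None],
    audit_log: list,
) -> str:
    """Decide whether the agent may act, then record the decision."""
    if action not in policy.allowed_actions:
        decision = "rejected_out_of_scope"
    elif amount > policy.approval_threshold:
        decision = "escalated_to_human"  # human oversight checkpoint
    else:
        try:
            execute()
            decision = "executed"
        except Exception as err:
            # Fallback: hand the task to a person instead of retrying blindly.
            decision = f"failed_fallback_to_human: {err}"
    # Audit trail: every decision is logged, including refusals.
    audit_log.append(
        {"ts": time.time(), "action": action, "amount": amount, "decision": decision}
    )
    return decision


policy = Policy(allowed_actions=frozenset({"approve_po"}), approval_threshold=1000.0)
log: list = []
print(guarded_execute("approve_po", 250.0, policy, lambda: None, log))
print(guarded_execute("approve_po", 5000.0, policy, lambda: None, log))
print(guarded_execute("delete_vendor", 0.0, policy, lambda: None, log))
```

The point of the design is that the log grows by one entry per request regardless of outcome, so a reviewer can later see what the agent was asked to do as well as what it actually did.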

Governance frameworks are emerging to address this gap. MetaComp’s recently launched StableX Know Your Agent (KYA) framework is the first purpose-built governance system for AI agents operating in regulated financial services — covering payments, compliance, and wealth management workflows. Meanwhile, enterprise infrastructure vendors like SUSE are delivering agentic AI capabilities for data center and cloud management with security and compliance built in across Linux and Kubernetes distributions. The consistent message from industry leaders: agents need defined scope, clear escalation paths, and observability infrastructure — not just expanded capability.

Three Takeaways for Leaders Acting on This Now

The Stanford AI Index 2026 draws a clear line in the sand: AI agents have crossed from impressive demos into operational reality. Here’s what that means in practice for your organization.

The window for early-mover advantage is narrowing. The jump from 12% to 66% happened in a single year, and the next benchmark cycle may close the remaining gap to human performance entirely. Organizations not actively evaluating agent deployment are already falling behind competitors who are.

Capability without governance is a liability. The organizations that will benefit most from AI agents in 2026 are those building structured workflows with clearly defined scope, not handing agents open-ended mandates. Define boundaries. Set escalation triggers. Instrument for observability from day one.

The skills gap is the real bottleneck. The infrastructure is ready. Teams that invest now in understanding how to design, deploy, and supervise AI agent workflows will carry a durable competitive advantage as the technology continues its rapid maturation.
